Greetings from Berkeley, where we’ve recently welcomed a merry band of cryptographers for what promises to be an outstanding summer program on...

We’re delighted to share that Miller fellow and Simons Institute Quantum Pod postdoc Ewin Tang has been awarded the 2025 Maryam Mirzakhani New...

This month, we held a joint workshop with SLMath on AI for Mathematics and Theoretical Computer Science. It was unlike any other Simons Institute...

Greetings from Berkeley, where an exciting semester is drawing to a close. This week we say goodbye to the participants in our fall research programs on Logic and Algorithms in Database Theory and AI, and on Data Structures and Optimization for Fast Algorithms. We will resume activities early in the new year, with synergistic spring programs on Error-Correcting Codes and on Quantum Algorithms, Complexity, and Fault Tolerance.

Some of the richest and most lasting mathematical ideas come about when two distinct fields come together and pay close attention to their intersections. That was the goal of the workshop on Structural Results held at the Simons Institute in July 2023, in which extremal combinatorists and theoretical computer scientists specializing in complexity convened to talk about the overlap between their two fields.

The Simons Institute’s ninth Industry Day was our largest to date, with over 150 attendees from the Institute, the broader UC Berkeley campus, our partner and sponsor companies, and beyond. The event, which took place on November 2, was designed to facilitate knowledge-sharing among industry and academic scientists, and to highlight the importance of industry partners in supporting research in the foundations of computing.

In his talk at the Simons Institute’s ninth annual Industry Day, Alon Halevy (Meta, Reality Labs Research) explored the use of AI technology for promoting personal well-being.

Greetings from Berkeley. With the holidays approaching, the final workshops of each of the fall semester programs are upon us, with the workshop on Logic and Algebra for Query Evaluation taking place this week, and a workshop on Optimization and Algorithm Design scheduled for the week after Thanksgiving.

In his presentation in our Theoretically Speaking public lecture series, Leonardo de Moura (AWS) described the Lean proof assistant’s contributions to mathematics; its extensive mathematical library, which encompasses over a million lines of formalized mathematics; its pivotal role in cutting-edge efforts such as the Liquid Tensor Experiment; its impact on mathematical education; and its role in AI for mathematics.

In the opening talk from the Simons Institute’s recent workshop on Online and Matching-Based Market Design, Paul Milgrom (Stanford) introduced fast approximation algorithms for the knapsack problem that have no confirming negative externalities and that guarantee close to 100% for both allocation and investment.

In her Richard M. Karp Distinguished Lecture, Monika Henzinger (Institute of Science and Technology Austria) surveyed the state of the art in dynamic graph algorithms, the different algorithmic techniques developed for them, and the many questions in the field that remain open.

The Simons Institute for the Theory of Computing has received a $300,000 grant from the UC Noyce Initiative to hold a research program on Cryptography in Summer 2025, and a Department of Energy sub-award of $1.2 million in support of the Institute’s Research Pod in Quantum Computing.

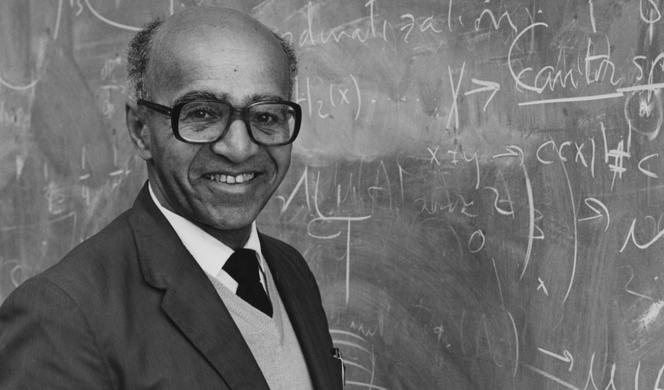

The theorem goes by many names, as researchers have discovered and rediscovered it in different contexts. Some call it the experts’ theorem: for example, given two recommendations from two experts to buy two different stocks, the theorem lays out a method to combine the two recommendations that will perform almost as well as the better of the two experts. For this story, we will call it Blackwell’s approachability theorem, to highlight David Blackwell, the UC Berkeley mathematician who proved and published it in 1956.
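To make the "experts" reading concrete, here is a minimal sketch using the multiplicative-weights (Hedge) algorithm, a standard method for the experts problem. It is a swapped-in illustration, not Blackwell's own approachability construction. The function name `hedge`, the loss encoding (each expert incurs a loss in [0, 1] every round), the learning rate `eta`, and the two-expert data are all assumptions made for the sketch. For losses in [0, 1], Hedge's expected cumulative loss exceeds the best single expert's by at most ln(n)/eta + eta*T/8; tuning eta = sqrt(8 ln n / T) makes that gap at most sqrt(T ln n / 2), which grows more slowly than the number of rounds T.

```python
import math


def hedge(expert_losses, eta):
    """Multiplicative-weights (Hedge) sketch over a fixed set of experts.

    expert_losses: one list per round, holding each expert's loss in [0, 1].
    eta: learning rate (an assumption; tuned below from the horizon T).
    Returns (learner_loss, best_expert_loss), where learner_loss is the
    expected loss of following an expert sampled from the current weights.
    """
    n = len(expert_losses[0])
    weights = [1.0] * n          # start by trusting every expert equally
    expert_totals = [0.0] * n    # cumulative loss of each individual expert
    learner_loss = 0.0
    for losses in expert_losses:
        total = sum(weights)
        probs = [w / total for w in weights]
        # Expected loss this round when mixing the experts' advice by probs.
        learner_loss += sum(p * l for p, l in zip(probs, losses))
        for i, l in enumerate(losses):
            expert_totals[i] += l
            weights[i] *= math.exp(-eta * l)  # shrink weights of experts that lost
    return learner_loss, min(expert_totals)


# Hypothetical example: two "stock-picking" experts over T rounds.
T = 1000
# Expert A alternates between losses 0 and 1; expert B always loses 0.3.
rounds = [[float(t % 2), 0.3] for t in range(T)]
eta = math.sqrt(8 * math.log(2) / T)
learner, best = hedge(rounds, eta)
bound = math.sqrt(T * math.log(2) / 2)  # worst-case regret guarantee for n = 2
print(f"learner={learner:.1f} best={best:.1f} "
      f"regret={learner - best:.1f} bound={bound:.1f}")
```

The multiplicative update is the design choice that matters here: an expert's weight decays exponentially in its cumulative loss, so the mixture drifts toward whichever expert has done better so far without ever committing to it outright.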