Speaker 1: Kevin Tian, Stanford University

Title: Robust Regression Revisited: Acceleration and Improved Estimation Rates

Abstract: We study fast algorithms for statistical regression problems under the strong contamination model, where the goal is to approximately optimize a generalized linear model (GLM) given adversarially corrupted samples. Prior works in this line of research were based on the robust gradient descent framework of Prasad et. al., a first-order method using biased gradient queries, or the Sever framework of Diakonikolas et. al., an iterative outlier-removal method calling a stationary point finder. We present nearly-linear time algorithms for robust regression problems with improved runtime or estimation guarantees compared to the state-of-the-art. For the general case of smooth GLMs (e.g. logistic regression), we show that the robust gradient descent framework of Prasad et. al. can be accelerated, and show our algorithm extends to optimizing the Moreau envelopes of Lipschitz GLMs (e.g. support vector machines), answering several open questions in the literature. For the well-studied case of robust linear regression, we present an alternative approach obtaining improved estimation rates over prior nearly-linear time algorithms. Interestingly, our method starts with an identifiability proof introduced in the context of the sum-of-squares algorithm of Bakshi and Prasad, which achieved optimal error rates while requiring large polynomial runtime and sample complexity. We reinterpret their proof within the Sever framework and obtain a dramatically faster and more sample-efficient algorithm under fewer distributional assumptions. Joint work with Arun Jambulapati, Jerry Li, and Tselil Schramm.

Speaker 2: Yuetian Luo, University of Wisconsin-Madison

Title: Computational Limits for Tensor Clustering with Planted and Block Structures

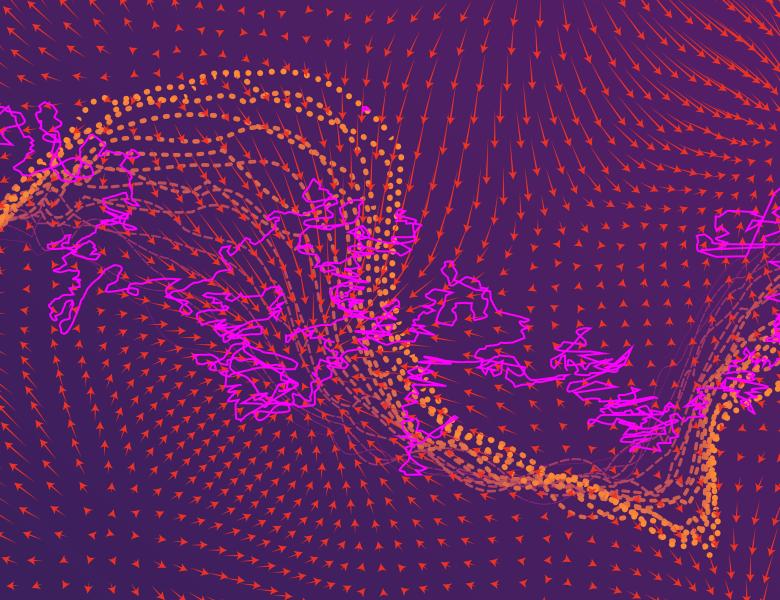

Abstract: In this talk, we consider two types of tensor clustering problems with planted and block structures. For tensor clustering with planted structures, we further consider two clustering models, constant high-order clustering (CHC) and rank-one higher-order clustering (ROHC).

For CHC and ROHC, we consider testing whether a cluster exists (detection) and identifying the support of cluster (recovery). We identify the sharp statistical and computational limits under these two models. The computational lower bounds are established based on the average-case reduction assuming the computational hardness conjectures of hypergraphic planted clique (HPC) detection and hypergraphic planted dense subgraph (HPDS) recovery. Both sparsity and tensor structures yield the computational barriers in high-order tensor clustering. The interplay between them results in significant differences between high-order tensor clustering and matrix clustering in literature in aspects of statistical and computational phase transition diagrams, algorithmic approaches and hardness conjectures. The results give the first thorough characterization of the statistical and computational trade-offs for a problem with double computational barriers. In addition, we provide evidence for the computational hardness conjectures of HPC detection (via low-degree polynomial and Metropolis methods) and HPDS recovery (via low-degree polynomial method).

For tensor clustering with block structures, we consider recovering the membership vectors along each mode. The computational limit is established via reducing to ROHC and matching efficient algorithms based on high-order spectral initialization with Lloyd refinement is also provided. (Joint work with Rungang Han, Miaoyan Wang and Anru Zhang.)

All scheduled dates:

Upcoming

No Upcoming activities yet