Abstract

Inference problems with conjectured statistical-computational gaps are ubiquitous throughout modern statistics, computer science and statistical physics. While there has been success evidencing these gaps from the failure of restricted classes of algorithms, progress towards a more traditional reduction-based approach to computational complexity in statistical inference has been limited. Existing reductions have largely been limited to inference problems with similar structure -- primarily mapping among problems representable as a sparse submatrix signal plus a noise matrix, which are similar to the common hardness assumption of planted clique.

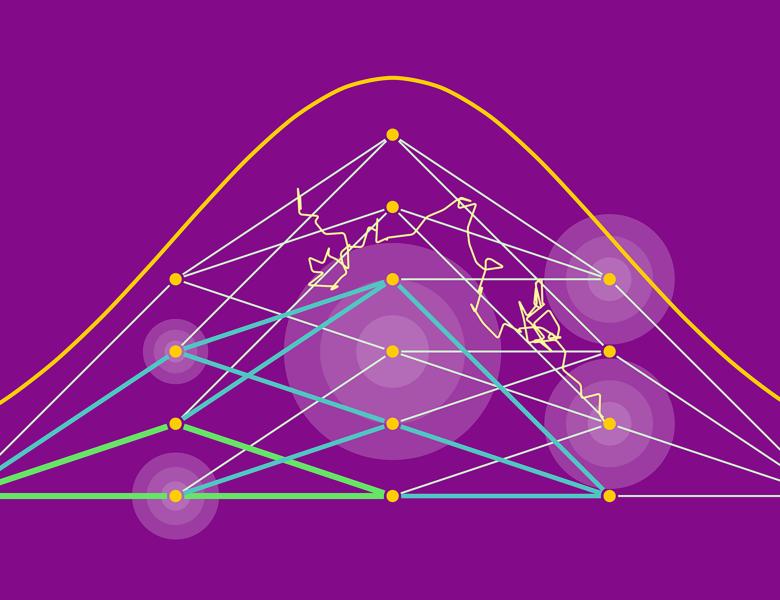

The insight in this work is that a slight generalization of the planted clique conjecture -- secret leakage planted clique -- enables the success of a variety of new average-case reduction techniques, yielding a web of reductions among problems with very different structures. Using variants of the planted clique conjecture for specific forms of secret leakage planted clique, we deduce tight statistical-computational tradeoffs for a diverse range of problems including robust sparse mean estimation, mixtures of sparse linear regressions, robust sparse linear regression, tensor PCA, variants of dense k-block stochastic block models, negatively correlated sparse PCA, semirandom planted dense subgraph, detection in hidden partition models and a universality principle for learning sparse mixtures. Joint work with Matthew Brennan.